Naive Bayes Exercise-程序员宅基地

技术标签: Naive Bayes Machine Learning

本文将通过朴素贝叶斯解决邮件的分类问题。理论文献参考:http://openclassroom.stanford.edu/MainFolder/DocumentPage.php?course=MachineLearning&doc=exercises/ex6/ex6.html。文章将分为三个部分,首先介绍一下基本的概率概念,然后对给出了特征的邮件进行分类,最后给出邮件特征的提取代码。

概率知识

表示在一系列事件(数据)中发生y 的概率。

表示在一系列事件(数据)中发生y 的概率。

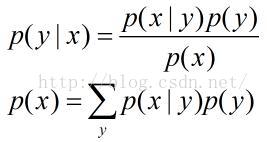

表示给定x 后,发生y 的概率。

表示给定x 后,发生y 的概率。

称之为先验概率,即不需要考虑x 的影响;

称之为先验概率,即不需要考虑x 的影响;

表示给定x 后,发生y 的概率,故称之为y 的后验概率。

表示给定x 后,发生y 的概率,故称之为y 的后验概率。

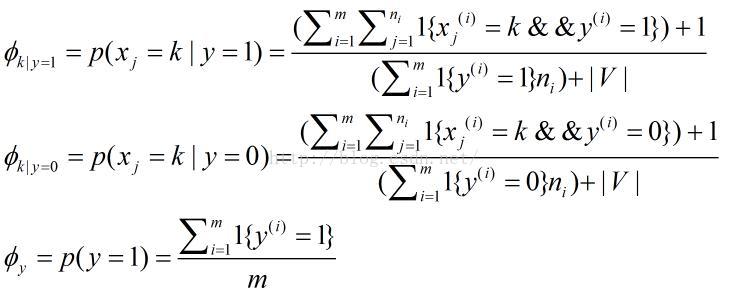

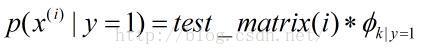

表示在一封垃圾邮件中给定单词是字典中第k个单词的概率;

表示在一封垃圾邮件中给定单词是字典中第k个单词的概率;

表示在一封非垃圾邮件中给定单词是字典中第k个单词的概率;

表示在一封非垃圾邮件中给定单词是字典中第k个单词的概率;

表示训练邮件中垃圾邮件所占概率;

表示训练邮件中垃圾邮件所占概率;

个单词,字典中包含

个单词,字典中包含

个单词。

个单词。

邮件分类

clc, clear;

% Load the features

numTrainDocs = 700;

numTokens = 2500;

M = dlmread('train-features.txt', ' ');

spmatrix = sparse(M(:,1), M(:,2), M(:,3), numTrainDocs, numTokens); % size: numTrainDocs * numTokens

% row: document numbers

% col: words in dirctionary

% element: occurrences

train_matrix = full(spmatrix);

% Load the labels for training set

train_labels = dlmread('train-labels.txt'); % i-th label corresponds to the i-th row in train_matrix

% Train

% 1. Calculate \phi_y

phi_y = sum(train_labels) ./ length(train_labels);

% 2. Calculate each \phi_k|y=1 for each dictionary word and store the all

% result in a vector

spam_index = find(1 == train_labels);

nonspam_index = find(0 == train_labels);

spam_sum = sum(train_matrix(spam_index, :));

nonspam_sum = sum(train_matrix(nonspam_index, :));

phi_k_y1 = (spam_sum + 1) ./ (sum(spam_sum) + numTokens);

phi_k_y0 = (nonspam_sum + 1) ./ (sum(nonspam_sum) + numTokens);

% Test set

test_features = dlmread('test-features.txt');

spmatrix = sparse(test_features(:,1), test_features(:,2),test_features(:,3));

test_matrix = full(spmatrix);

numTestDocs = size(test_matrix, 1);

% Calculate probability

prob_spam = log(test_matrix * phi_k_y1') + log(phi_y);

prob_nonspam = log(test_matrix * phi_k_y0') + log(1 - phi_y);

output = prob_spam > prob_nonspam;

% Read the correct labels of the test set

test_labels = dlmread('test-labels.txt');

% Compute the error on the test set

% A document is misclassified if it's predicted label is different from

% the actual label, so count the number of 1's from an exclusive "or"

numdocs_wrong = sum(xor(output, test_labels))

%Print out error statistics on the test set

error = numdocs_wrong/numTestDocs<pre name="code" class="plain">log_a = test_matrix*(log(prob_tokens_spam))' + log(prob_spam);

log_b = test_matrix*(log(prob_tokens_nonspam))'+ log(1 - prob_spam);  ,即对于每一个特征单词求总的概率

,即对于每一个特征单词求总的概率

log_a = log(test_matrix * prob_tokens_spam') + log(prob_spam);

log_b = log(test_matrix * prob_tokens_nonspam')+ log(1 - prob_spam); 特征单词提取

clc;clear

% This file extracts features of emails for judging whether an email is a

% spam or not.

%% Read all text files in cell variable 'data'

data = cell(0);

directory = dir('.');

numberDirect = length(directory);

for n = 3 : numberDirect

files = dir(directory(n).name);

numberFiles = length(files);

for i = 3 : numberFiles

% Be careful the path

fid = fopen(['.\', directory(n).name, '\', files(i).name]);

if (-1 == fid)

fclose(fid);

continue;

end

dataTemp = textscan(fid, '%s', '\n');

fclose(fid);

data = [data; dataTemp{1, 1}];

end

end

%% Sort the data by alphabet.

data = sort(data);

% Count occurrences and delete duplicate words and store in a struct variable.

numberStrings = length(data);

words = struct('strings', {}, 'occurrences', 0);

numberFeature = 1;

occurrences = 1;

for i = 1 : numberStrings - 1

if (strcmp(char(data(i)), char(data(i + 1))))

occurrences = occurrences + 1;

else

words(numberFeature).strings = char(data(i));

words(numberFeature).occurrences = occurrences;

numberFeature = numberFeature + 1;

occurrences = 1;

end

end

words = struct2cell(words);

%% This is only for testing, or you can use

% 'sortrows(cell2mat(words(2, 1, :)))' for getting the 2500 most words.

orders = ones(numberFeature - 1, 1);

for i = 2 : numberFeature - 1

orders(i) = orders(i) + orders(i - 1);

end

features_number = cell2mat(words(2, 1, :));

features_numbers = [features_number(:), orders];

%% Get the 2500 most words to generate dictionary

features_numbers = sortrows(features_numbers);

directionary = words(:, :, features_numbers(:, 2));

directionary = directionary(1, 1, end - 2500 : end - 1);

directionary = sort(directionary(:));

%% calculate features in all folders for trainset and testset

for n = 3 : numberDirect

files = dir(directory(n).name);

numberFiles = length(files);

for i = 3 : numberFiles

fid = fopen(['.\', directory(n).name, '\', files(i).name]);

if (-1 == fid)

fclose(fid);

continue;

end

dataTemp = textscan(fid, '%s', '\n');

fclose(fid);

data = dataTemp{1,1};

feature = find_indexandcount(data, directionary);

docnumber = (i - 2) * ones(size(feature, 1), 1);

featrues = [docnumber, feature];

if (3 == i)

dlmwrite(['.\', directory(n).name, '\', 'features.txt'], featrues, 'delimiter', ' ');

else

dlmwrite(['.\', directory(n).name, '\', 'features.txt'], featrues, '-append', 'delimiter', ' ');

end

end

endfunction result = find_indexandcount(email, dictionary)

% This function computes words' index and count in an email by a dictionary.

numberStrings = length(email);

numberWords = length(dictionary);

count = zeros(length(dictionary), 1);

for i = 1 : numberStrings

count = count + strcmp(email(i), dictionary);

end

% Record order sequence

orders = ones(numberWords, 1);

for i = 2 : length(dictionary) - 1

orders(i) = orders(i) + orders(i - 1);

end

result = sortrows([orders, count], 2);

% Find words which appear more than 1 times

reserve = find(result(:, 2));

result = result(reserve, :);

end% train.m

% Exercise 6: Naive Bayes text classifier

clear all; close all; clc

% store the number of training examples

numTrainDocs = 350;

% store the dictionary size

numTokens = 2500;

% read the features matrix

M0 = dlmread('nonspam_features_train.txt', ' ');

M1 = dlmread('spam_features_train.txt', ' ');

nonspmatrix = sparse(M0(:,1), M0(:,2), M0(:,3), numTrainDocs, numTokens);

nonspamtrain_matrix = full(nonspmatrix);

spmatrix = sparse(M1(:,1), M1(:,2), M1(:,3), numTrainDocs, numTokens);

spamtrain_matrix = full(spmatrix);

% Calculate probability of spam

phi_y = size(spamtrain_matrix, 1) / (size(spamtrain_matrix, 1) + size(nonspamtrain_matrix, 1));

spam_sum = sum(spamtrain_matrix);

nonspam_sum = sum(nonspamtrain_matrix);

% the k-th entry of prob_tokens_spam represents phi_(k|y=1)

phi_k_y1 = (spam_sum + 1) ./ (sum(spam_sum) + numTokens);

% the k-th entry of prob_tokens_nonspam represents phi_(k|y=0)

phi_k_y0 = (nonspam_sum + 1) ./ (sum(nonspam_sum) + numTokens);

% Test set

test_features_spam = dlmread('spam_features_test.txt',' ');

test_features_nonspam = dlmread('nonspam_features_test.txt',' ');

numTestDocs = max(test_features_spam(:,1));

spmatrix = sparse(test_features_spam(:,1), test_features_spam(:,2),test_features_spam(:,3),numTestDocs, numTokens);

nonspmatrix = sparse(test_features_nonspam(:,1), test_features_nonspam(:,2),test_features_nonspam(:,3),numTestDocs,numTokens);

test_matrix = [full(spmatrix); full(nonspmatrix)];

% Calculate probability

prob_spam = log(test_matrix * phi_k_y1') + log(phi_y);

prob_nonspam = log(test_matrix * phi_k_y0') + log(1 - phi_y);

output = prob_spam > prob_nonspam;

% Compute the error on the test set

test_labels = [ones(numTestDocs, 1); zeros(numTestDocs, 1)];

wrong_numdocs = sum(xor(output, test_labels))

%Print out error statistics on the test set

error_prob = wrong_numdocs/numTestDocs

其结果如下:(分类错误的个数及概率)

智能推荐

艾美捷Epigentek DNA样品的超声能量处理方案-程序员宅基地

文章浏览阅读15次。空化气泡的大小和相应的空化能量可以通过调整完全标度的振幅水平来操纵和数字控制。通过强调超声技术中的更高通量处理和防止样品污染,Epigentek EpiSonic超声仪可以轻松集成到现有的实验室工作流程中,并且特别适合与表观遗传学和下一代应用的兼容性。Epigentek的EpiSonic已成为一种有效的剪切设备,用于在染色质免疫沉淀技术中制备染色质样品,以及用于下一代测序平台的DNA文库制备。该装置的经济性及其多重样品的能力使其成为每个实验室拥有的经济高效的工具,而不仅仅是核心设施。

11、合宙Air模块Luat开发:通过http协议获取天气信息_合宙获取天气-程序员宅基地

文章浏览阅读4.2k次,点赞3次,收藏14次。目录点击这里查看所有博文 本系列博客,理论上适用于合宙的Air202、Air268、Air720x、Air720S以及最近发布的Air720U(我还没拿到样机,应该也能支持)。 先不管支不支持,如果你用的是合宙的模块,那都不妨一试,也许会有意外收获。 我使用的是Air720SL模块,如果在其他模块上不能用,那就是底层core固件暂时还没有支持,这里的代码是没有问题的。例程仅供参考!..._合宙获取天气

EasyMesh和802.11s对比-程序员宅基地

文章浏览阅读7.7k次,点赞2次,收藏41次。1 关于meshMesh的意思是网状物,以前读书的时候,在自动化领域有传感器自组网,zigbee、蓝牙等无线方式实现各个网络节点消息通信,通过各种算法,保证整个网络中所有节点信息能经过多跳最终传递到目的地,用于数据采集。十多年过去了,在无线路由器领域又把这个mesh概念翻炒了一下,各大品牌都推出了mesh路由器,大多数是3个为一组,实现在面积较大的住宅里,增强wifi覆盖范围,智能在多热点之间切换,提升上网体验。因为节点基本上在3个以内,所以mesh的算法不必太复杂,组网形式比较简单。各厂家都自定义了组_802.11s

线程的几种状态_线程状态-程序员宅基地

文章浏览阅读5.2k次,点赞8次,收藏21次。线程的几种状态_线程状态

stack的常见用法详解_stack函数用法-程序员宅基地

文章浏览阅读4.2w次,点赞124次,收藏688次。stack翻译为栈,是STL中实现的一个后进先出的容器。要使用 stack,应先添加头文件include<stack>,并在头文件下面加上“ using namespacestd;"1. stack的定义其定义的写法和其他STL容器相同, typename可以任意基本数据类型或容器:stack<typename> name;2. stack容器内元素的访问..._stack函数用法

2018.11.16javascript课上随笔(DOM)-程序员宅基地

文章浏览阅读71次。<li> <a href = "“#”>-</a></li><li>子节点:文本节点(回车),元素节点,文本节点。不同节点树: 节点(各种类型节点)childNodes:返回子节点的所有子节点的集合,包含任何类型、元素节点(元素类型节点):child。node.getAttribute(at...

随便推点

layui.extend的一点知识 第三方模块base 路径_layui extend-程序员宅基地

文章浏览阅读3.4k次。//config的设置是全局的layui.config({ base: '/res/js/' //假设这是你存放拓展模块的根目录}).extend({ //设定模块别名 mymod: 'mymod' //如果 mymod.js 是在根目录,也可以不用设定别名 ,mod1: 'admin/mod1' //相对于上述 base 目录的子目录}); //你也可以忽略 base 设定的根目录,直接在 extend 指定路径(主要:该功能为 layui 2.2.0 新增)layui.exten_layui extend

5G云计算:5G网络的分层思想_5g分层结构-程序员宅基地

文章浏览阅读3.2k次,点赞6次,收藏13次。分层思想分层思想分层思想-1分层思想-2分层思想-2OSI七层参考模型物理层和数据链路层物理层数据链路层网络层传输层会话层表示层应用层OSI七层模型的分层结构TCP/IP协议族的组成数据封装过程数据解封装过程PDU设备与层的对应关系各层通信分层思想分层思想-1在现实生活种,我们在喝牛奶时,未必了解他的生产过程,我们所接触的或许只是从超时购买牛奶。分层思想-2平时我们在网络时也未必知道数据的传输过程我们的所考虑的就是可以传就可以,不用管他时怎么传输的分层思想-2将复杂的流程分解为几个功能_5g分层结构

基于二值化图像转GCode的单向扫描实现-程序员宅基地

文章浏览阅读191次。在激光雕刻中,单向扫描(Unidirectional Scanning)是一种雕刻技术,其中激光头只在一个方向上移动,而不是来回移动。这种移动方式主要应用于通过激光逐行扫描图像表面的过程。具体而言,单向扫描的过程通常包括以下步骤:横向移动(X轴): 激光头沿X轴方向移动到图像的一侧。纵向移动(Y轴): 激光头沿Y轴方向开始逐行移动,刻蚀图像表面。这一过程是单向的,即在每一行上激光头只在一个方向上移动。返回横向移动: 一旦一行完成,激光头返回到图像的一侧,准备进行下一行的刻蚀。

算法随笔:强连通分量-程序员宅基地

文章浏览阅读577次。强连通:在有向图G中,如果两个点u和v是互相可达的,即从u出发可以到达v,从v出发也可以到达u,则成u和v是强连通的。强连通分量:如果一个有向图G不是强连通图,那么可以把它分成躲个子图,其中每个子图的内部是强连通的,而且这些子图已经扩展到最大,不能与子图外的任一点强连通,成这样的一个“极大连通”子图是G的一个强连通分量(SCC)。强连通分量的一些性质:(1)一个点必须有出度和入度,才会与其他点强连通。(2)把一个SCC从图中挖掉,不影响其他点的强连通性。_强连通分量

Django(2)|templates模板+静态资源目录static_django templates-程序员宅基地

文章浏览阅读3.9k次,点赞5次,收藏18次。在做web开发,要给用户提供一个页面,页面包括静态页面+数据,两者结合起来就是完整的可视化的页面,django的模板系统支持这种功能,首先需要写一个静态页面,然后通过python的模板语法将数据渲染上去。1.创建一个templates目录2.配置。_django templates

linux下的GPU测试软件,Ubuntu等Linux系统显卡性能测试软件 Unigine 3D-程序员宅基地

文章浏览阅读1.7k次。Ubuntu等Linux系统显卡性能测试软件 Unigine 3DUbuntu Intel显卡驱动安装,请参考:ATI和NVIDIA显卡请在软件和更新中的附加驱动中安装。 这里推荐: 运行后,F9就可评分,已测试显卡有K2000 2GB 900+分,GT330m 1GB 340+ 分,GT620 1GB 340+ 分,四代i5核显340+ 分,还有写博客的小盒子100+ 分。relaybot@re...